Showing archive for: “Common Law”

No More Kings? Due Process and Regulation Without Representation Under the UK Competition Bill

What should a competition law for the 21st century look like? This question is debated across many jurisdictions. The Digital Markets, Competition, and Consumers Bill (DMCC) would change UK competition law’s approach to large platforms. The bill’s core purpose is to place the UK Competition and Markets Authority’s (CMA) Digital Markets Unit (DMU) on a statutory footing with ...

Twitter v. Taamneh: Intermediary Liability, The First Amendment, and Section 230

After the oral arguments in Twitter v. Taamneh, Geoffrey Manne, Kristian Stout, and I spilled a lot of ink thinking through the law & economics of intermediary liability and how to draw lines when it comes to social-media companies’ responsibility to prevent online harms stemming from illegal conduct on their platforms. With the Supreme Court’s recent decision in Twitter v. Taamneh, ...

UK Poised to Begin Realizing Brexit’s Regulatory-Reform Potential

The United Kingdom’s 2016 “Brexit” decision to leave the European Union created an opportunity to eliminate unwarranted and excessive EU regulations that had constrained UK economic growth and efficiency. Recognizing that fact, former Prime Minister Boris Johnson launched the Task Force on Innovation, Growth, and Regulatory Reform, whose May 2021 report recommended “a new regulatory ...

The Law & Economics of Children’s Online Safety: The First Amendment and Online Intermediary Liability

Legislation to secure children’s safety online is all the rage right now, not only on Capitol Hill, but in state legislatures across the country. One of the favored approaches is to impose on platforms a duty of care to protect teen users. For example, Sens. Richard Blumenthal (D-Conn.) and Marsha Blackburn (R-Tenn.) have reintroduced the Kids ...

No, Mergers Are Not Like ‘The Ultimate Cartel’

There is a line of thinking according to which, without merger-control rules, antitrust law is “incomplete.”[1] Without such a regime, the argument goes, whenever a group of companies faces the risk of being penalized for cartelizing, they could instead merge and thus “raise prices without any legal consequences.”[2] A few months ago, at a ...

No, Chevron Deference Will Not Save the FTC’s Noncompete Ban

The Federal Trade Commission (FTC) announced in a notice of proposed rulemaking (NPRM) last month that it intends to ban most noncompete agreements. Is that a good idea? As a matter of policy, the question is debatable. So far as the NPRM is concerned, however, that debate is largely hypothetical. It is unlikely that any ...

FTC UMC Roundup – Circular Firing Squad Edition

Welcome to the FTC UMC Roundup for June 17, 2022. This week’s roundup is a bit shorter – but only because your narrator would rather be out climbing mountains in Squamish, BC, than reading or writing about Sen. Amy Klobuchar’s (D-MN) pretty bad week. From where I sit, me climbing a multipitch 5.13 mountain looks ...

NEW VOICES: FTC Rulemaking for Noncompetes

On July 9, 2021, President Joe Biden issued an executive order asking the Federal Trade Commission (FTC) to “curtail the unfair use of noncompete clauses and other clauses or agreements that may unfairly limit worker mobility.” This executive order raises two questions. First, does the FTC have the authority to issue such a rule? And ...

Chevron and Administrative Antitrust, Redux

[Wrapping up the first week of our FTC UMC Rulemaking symposium is a post from Truth on the Market’s own Justin (Gus) Hurwitz, director of law & economics programs at the International Center for Law & Economics and an assistant professor of law and co-director of the Space, Cyber, and Telecom Law program at the ...

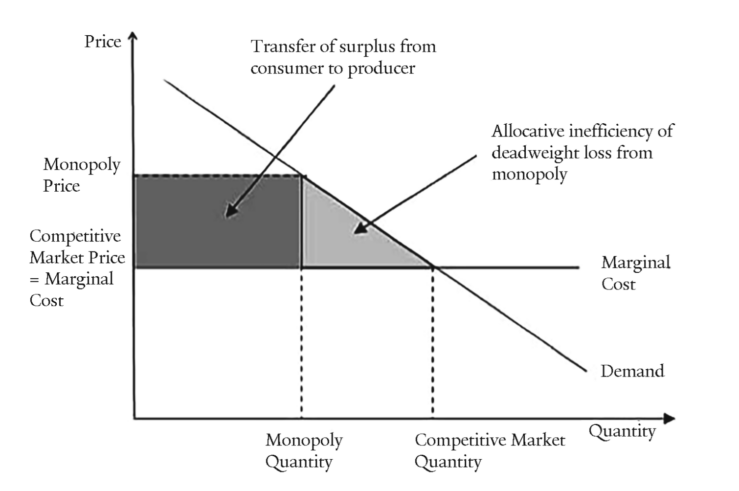

Toward a Dynamic Consumer Welfare Standard for Contemporary U.S. Antitrust Enforcement

For decades, consumer-welfare enhancement appeared to be a key enforcement goal of competition policy (antitrust, in the U.S. usage) in most jurisdictions: The U.S. Supreme Court famously proclaimed American antitrust law to be a “consumer welfare prescription” in Reiter v. Sonotone Corp. (1979). A study by the current adviser to the European Competition Commission’s chief ...

Unpacking the Flawed 2021 Draft USPTO, NIST, & DOJ Policy Statement on Standard-Essential Patents (SEPs)

Responding to a new draft policy statement from the U.S. Patent & Trademark Office (USPTO), the National Institute of Standards and Technology (NIST), and the U.S. Department of Justice, Antitrust Division (DOJ) regarding remedies for infringement of standard-essential patents (SEPs), a group of 19 distinguished law, economics, and business scholars convened by the International Center ...

Fleites v. MindGeek Contemplates Significant Expansion of Collateral Liability

In Fleites v. MindGeek, currently before the U.S. District Court for the Central District of California, Southern Division, plaintiffs seek to hold MindGeek subsidiary PornHub liable for alleged instances of human trafficking under the Racketeer Influenced and Corrupt Organizations Act (RICO) and the Trafficking Victims Protection Reauthorization Act (TVPRA). Writing for the International Center for Law & Economics ...