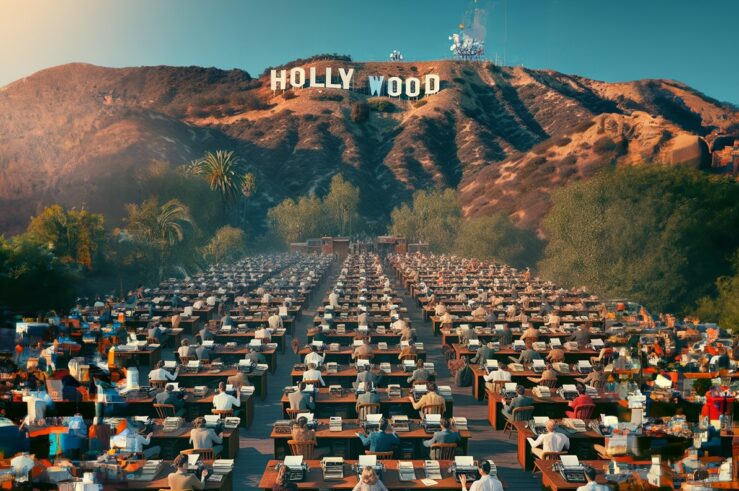

Not only have digital-image generators like Stable Diffusion, DALL-E, and Midjourney—which make use of deep-learning models and other artificial-intelligence (AI) systems—created some incredible (and sometimes creepy – see above) visual art, but they’ve engendered a good deal of controversy, as well. Human artists have banded together as part of a fledgling anti-AI campaign; lawsuits have been filed; and policy experts have been trying to think through how these machine-learning systems interact with various facets of the law.

Debates about the future of AI have particular salience for intellectual-property rights. Copyright is notoriously difficult to protect online, and these systems add a further wrinkle: it can at least be argued that their outputs are unique creations. There are also, of course, moral and philosophical objections to those arguments, many of them grounded in the supposition that only a human (or something with a brain, like humans) can be creative.

Leaving aside for the moment a potentially pitched battle over the definition of “creation,” we should be able to find consensus that at least some of these systems produce unique outputs and are not merely cutting and pasting other pieces of visual imagery into a new whole. That is, at some level, the machines are engaging in a rudimentary sort of “learning” about how humans arrange colors and lines when generating images of certain subjects. The machines then reconstruct this process and produce a new set of lines and colors that conform to the patterns they found in the human art.

But that isn’t the end of the story. Even if some of these systems’ outputs are unique and noninfringing, the way the machines learn—by ingesting existing artwork—can raise a number of thorny issues. Indeed, these systems are arguably infringing copyright during the learning phase, and such use may not survive a fair-use analysis.

We are still in the early days of thinking through how this new technology maps onto the law. Answers will inevitably come, but for now, there are some very interesting questions about the intellectual-property implications of AI-generated art, which I consider below.

The Points of Collision Between Intellectual Property Law and AI-Generated Art

AI-generated art is not a single thing. It is, rather, a collection of differing processes, each with different implications for the law. For the purposes of this post, I am going to deal with image-generation systems that use “generative adversarial networks” (GANs) and diffusion models. The various implementations of each will differ in some respects, but from what I understand, the ways that these techniques can be used to generate all sorts of media are sufficiently similar that we can begin to sketch out some of their legal implications.

A (very) brief technical description

This is a very high-level overview of how these systems work; for a more detailed (but very readable) description, see here.

A GAN is a type of machine-learning model that consists of two parts: a generator and a discriminator. The generator is trained to create new images that look like they come from a particular dataset, while the discriminator is trained to distinguish the generated images from real images in the dataset. The two parts are trained together in an adversarial manner, with the generator trying to produce images that can fool the discriminator and the discriminator trying to correctly identify the generated images.
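The adversarial setup can be made concrete with the two loss functions a GAN trainer typically minimizes. What follows is a minimal sketch in plain Python, assuming the discriminator outputs a probability that an image is real and using the common “non-saturating” form of the generator loss. The function names are illustrative, and a real system would compute these over batches of tensors and update network weights by gradient descent, which is omitted here.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator.

    d_real: discriminator scores (probabilities in (0, 1)) on real training images.
    d_fake: discriminator scores on images produced by the generator.
    The loss is low when real images score near 1 and generated ones near 0.
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator scores the
    generator's images near 1, i.e. when the discriminator has been fooled."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# A discriminator that is winning: confident and correct on both kinds of image.
print(round(discriminator_loss([0.99], [0.01]), 4), round(generator_loss([0.01]), 4))  # 0.0201 4.6052
# A discriminator that has been fooled: it can no longer tell the two apart.
print(round(discriminator_loss([0.5], [0.5]), 4), round(generator_loss([0.5]), 4))  # 1.3863 0.6931
```

The two losses pull in opposite directions: as the discriminator gets better, the generator's loss rises, and that is the signal that pushes the generator toward more convincing images.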

A diffusion model, by contrast, analyzes the distribution of information in an image as noise is progressively added to it. This kind of algorithm analyzes characteristics of sample images—like the distribution of colors or lines—in order to “understand” what counts as an accurate representation of a subject (i.e., what makes a picture of a cat look like a cat and not like a dog).
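The noising half of this process is simple enough to sketch. The example below assumes the DDPM-style formulation used by many diffusion models, in which a noise schedule (here an illustrative linear schedule of 1,000 steps) controls how much of the original image survives at each step, so that an image at step t can be produced in one jump as `x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps`. The “image” is just a short list of pixel values, and the neural network that would learn to predict the added noise `eps` is not shown.

```python
import math
import random


def alpha_bars(betas):
    """Cumulative product of (1 - beta): the fraction of the original signal's
    variance that survives after each step of the schedule."""
    out, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out


def add_noise(x0, alpha_bar, rng):
    """Jump directly to a noise level: x_t = sqrt(abar)*x0 + sqrt(1 - abar)*eps.

    Returns the noisy image and the noise that was mixed in; during training,
    a network would be asked to recover `eps` from the noisy image.
    """
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(alpha_bar) * p + math.sqrt(1.0 - alpha_bar) * e
          for p, e in zip(x0, eps)]
    return xt, eps


# Illustrative linear schedule: beta rises from 1e-4 to 0.02 over 1,000 steps.
betas = [1e-4 + (0.02 - 1e-4) * i / 999 for i in range(1000)]
abar = alpha_bars(betas)

rng = random.Random(0)
image = [0.9, -0.3, 0.1, -0.8]  # a toy four-pixel "image" scaled to [-1, 1]
for t in (0, 250, 999):
    noisy, _ = add_noise(image, abar[t], rng)
    print(t, round(abar[t], 5), [round(v, 2) for v in noisy])
```

By the final step, `abar` has fallen below 1e-4, so almost nothing of the original survives; that is why generation can begin from pure noise.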

For example, in the generation phase, systems like Stable Diffusion start with randomly generated noise and work backward in “denoising” steps to essentially “see” shapes:

The sampled noise is predicted so that if we subtract it from the image, we get an image that’s closer to the images the model was trained on (not the exact images themselves, but the distribution – the world of pixel arrangements where the sky is usually blue and above the ground, people have two eyes, cats look a certain way – pointy ears and clearly unimpressed).
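The generation loop described in that passage can be sketched as follows. This minimal sketch assumes the DDPM sampling rule and an illustrative linear noise schedule (betas from 1e-4 to 0.02 over 1,000 steps). Because there is no trained network here, it substitutes a hypothetical `oracle_noise_predictor` that is exact for a toy world in which every pixel is drawn from a Gaussian with a known mean and standard deviation; text prompts are not modeled. Note what the sampler consults at each step: a summary of the distribution (`mean` and `std`), never a stored training image.

```python
import math
import random


def linear_schedule(steps=1000, start=1e-4, end=0.02):
    """Illustrative noise schedule and its cumulative products."""
    betas = [start + (end - start) * i / (steps - 1) for i in range(steps)]
    alphas = [1.0 - b for b in betas]
    abars, running = [], 1.0
    for a in alphas:
        running *= a
        abars.append(running)
    return betas, alphas, abars


def oracle_noise_predictor(xt, abar, mean, std):
    """Hypothetical stand-in for the trained network.

    In a toy world where every pixel is drawn from N(mean, std**2), the best
    possible guess of the noise in x_t has a closed form. A real model has to
    learn an approximation of this from its training data.
    """
    a, b = math.sqrt(abar), math.sqrt(1.0 - abar)
    return [b * (x - a * mean) / (a * a * std * std + b * b) for x in xt]


def sample(num_pixels, mean, std, rng, steps=1000):
    """DDPM-style sampling: start from pure noise and, step by step,
    subtract a scaled estimate of the noise."""
    betas, alphas, abars = linear_schedule(steps)
    x = [rng.gauss(0.0, 1.0) for _ in range(num_pixels)]  # the palette of pure noise
    for t in reversed(range(steps)):
        eps_hat = oracle_noise_predictor(x, abars[t], mean, std)
        coef = betas[t] / math.sqrt(1.0 - abars[t])
        x = [(xi - coef * e) / math.sqrt(alphas[t]) for xi, e in zip(x, eps_hat)]
        if t > 0:  # re-inject a little fresh noise on every step but the last
            x = [xi + math.sqrt(betas[t]) * rng.gauss(0.0, 1.0) for xi in x]
    return x


pixels = sample(500, mean=0.3, std=0.1, rng=random.Random(0))
m = sum(pixels) / len(pixels)
s = math.sqrt(sum((p - m) ** 2 for p in pixels) / len(pixels))
print(round(m, 2), round(s, 2))  # should print values near 0.3 and 0.1
```

The generated pixels end up matching the statistics of the toy “training world” without any particular training image ever being reproduced.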

It is relevant here that, once networks using these techniques are trained, they do not need to rely on saved copies of the training images in order to generate new images. Of course, it’s possible that some implementations might be designed in a way that does save copies of those images, but for the purposes of this post, I will assume we are talking about systems that save known works only during the training phase. The models that are produced during training are, in essence, instructions to a different piece of software about how to take a text prompt from a user, start from a palette of pure noise, and progressively “discover” signal in that noise until some new image emerges.
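The division of labor described above can be illustrated with a deliberately crude stand-in. In the sketch below, the “model” is only a per-pixel mean and standard deviation rather than a neural network, and the `train`/`generate` split is a hypothetical simplification of how real systems package their weights; text prompts are ignored. What it shows is structural: the first piece must read (and so copy into memory) every training image, while the second piece receives only the model file, whose number of parameters stays the same whether it was trained on ten images or a thousand.

```python
import json
import random
import statistics


def train(images):
    """First piece: reads the training images (copied into memory to be
    analyzed) and emits a model file. In this toy, the 'model' is just a
    per-pixel mean and standard deviation."""
    columns = list(zip(*images))  # one tuple of values per pixel position
    model = {
        "mean": [statistics.fmean(col) for col in columns],
        "std": [statistics.pstdev(col) for col in columns],
    }
    return json.dumps(model)


def generate(model_file, seed):
    """Second piece: sees only the model file, never the training images."""
    model = json.loads(model_file)
    rng = random.Random(seed)
    return [rng.gauss(m, s) for m, s in zip(model["mean"], model["std"])]


rng = random.Random(0)
for corpus_size in (10, 1000):
    corpus = [[rng.uniform(0, 1) for _ in range(16)] for _ in range(corpus_size)]
    model_file = train(corpus)
    del corpus  # the training images can be discarded once the model exists
    params = json.loads(model_file)
    print(corpus_size, "images ->", len(params["mean"]) + len(params["std"]), "parameters")
    print(generate(model_file, seed=1)[:3])
```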

Input-stage use of intellectual property

OpenAI, the company behind the popular DALL-E image generator, is not shy about its use of protected works in the training phase of AI algorithms. In comments to the U.S. Patent and Trademark Office (PTO), it notes that:

…[m]odern AI systems require large amounts of data. For certain tasks, that data is derived from existing publicly accessible “corpora”… of data that include copyrighted works. By analyzing large corpora (which necessarily involves first making copies of the data to be analyzed), AI systems can learn patterns inherent in human-generated data and then use those patterns to synthesize similar data which yield increasingly compelling novel media in modalities as diverse as text, image, and audio. (emphasis added).

Thus, at the training stage, the most popular forms of machine-learning systems require making copies of existing works. And where the material being used is neither in the public domain nor licensed, an infringement occurs (as Getty Images argues in a suit it recently filed against Stability AI). Some affirmative defense is therefore needed to excuse the infringement.

Toward this end, OpenAI believes that its algorithmic training should qualify as a fair use. Other major services that use these AI techniques to “learn” from existing media would likely make similar arguments. But, at least in the way that OpenAI has framed the fair-use analysis (that these uses are sufficiently “transformative”), it’s not clear that they should qualify.

The purpose and character of the use

In brief, fair use—found in 17 USC § 107—provides for an affirmative defense against infringement when the use is “for purposes such as criticism, comment, news reporting, teaching…, scholarship, or research.” When weighing a fair-use defense, a court must balance a number of factors:

- the purpose and character of the use, including whether such use is of a commercial nature or is for nonprofit educational purposes;

- the nature of the copyrighted work;

- the amount and substantiality of the portion used in relation to the copyrighted work as a whole; and

- the effect of the use upon the potential market for or value of the copyrighted work.

OpenAI’s fair-use claim is rooted in the first factor: the purpose and character of the use. I should note, then, that what follows is solely a consideration of Factor 1, with special attention paid to whether these uses are “transformative.” But it is important to stipulate that fair-use analysis is a multi-factor test and that, even within the first factor, it’s not mandatory that a use be “transformative.” It is entirely possible that a court balancing all of the factors could, indeed, find that OpenAI is engaged in fair use, even if it does not agree that its use is “transformative.”

Whether the use of copyrighted works to train an AI is “transformative” is certainly a novel question, but it is likely answered through an observation that the U.S. Supreme Court made in Campbell v. Acuff-Rose Music:

[W]hat Sony said simply makes common sense: when a commercial use amounts to mere duplication of the entirety of an original, it clearly “supersede[s] the objects,”… of the original and serves as a market replacement for it, making it likely that cognizable market harm to the original will occur… But when, on the contrary, the second use is transformative, market substitution is at least less certain, and market harm may not be so readily inferred.

A key question, then, is whether training an AI on copyrighted works amounts to mere “duplication of the entirety of an original” or is sufficiently “transformative” to support a fair-use finding. OpenAI, as noted above, believes its use is highly transformative. According to its comments:

Training of AI systems is clearly highly transformative. Works in training corpora were meant primarily for human consumption for their standalone entertainment value. The “object of the original creation,” in other words, is direct human consumption of the author’s “expression.” Intermediate copying of works in training AI systems is, by contrast, “non-expressive”: the copying helps computer programs learn the patterns inherent in human-generated media. The aim of this process—creation of a useful generative AI system—is quite different than the original object of human consumption. The output is different too: nobody looking to read a specific webpage contained in the corpus used to train an AI system can do so by studying the AI system or its outputs. The new purpose and expression are thus both highly transformative.

But the way that OpenAI frames its system works against its interests in this argument. As noted above, and reinforced in the immediately preceding quote, an AI system like DALL-E or Stable Diffusion is actually made of at least two distinct pieces. The first is a piece of software that ingests existing works and creates a file that can serve as instructions to the second piece of software. The second piece of software then takes the output of the first part and can produce independent results. Thus, there is a clear discontinuity in the process, whereby the ultimate work created by the system is disconnected from the creative inputs used to train the software.

Therefore, contrary to what OpenAI asserts, the protected works are indeed ingested into the first part of the system “for their standalone entertainment value.” That is to say, the software is learning what counts as “standalone entertainment value,” and therefore the works must be used in those terms.

Surely, a computer is not sitting on a couch and surfing for its own entertainment. But it is solely for the very “standalone entertainment value” that the first piece of software is being shown copyrighted material. By contrast, parody or “remixing” uses incorporate the work into some secondary expression that transforms the input. The way these systems work is to learn what makes a piece entertaining and then to discard that piece altogether. Moreover, this use of art qua art most certainly interferes with the existing market insofar as this use is in lieu of reaching a licensing agreement with rightsholders.

The 2nd U.S. Circuit Court of Appeals dealt with an analogous case. In American Geophysical Union v. Texaco, the 2nd Circuit considered whether Texaco’s photocopying of scientific articles produced by the plaintiffs qualified for a fair-use defense. Texaco employed between 400 and 500 research scientists and, as part of supporting their work, maintained subscriptions to a number of scientific journals. It was common practice for Texaco’s scientists to photocopy entire articles and save them in a file.

The plaintiffs sued for copyright infringement. Texaco asserted that photocopying by its scientists for the purposes of furthering scientific research (that is, to train the scientists on the content of the journal articles) should count as a fair use, at least in part because it was sufficiently “transformative.” The 2nd Circuit disagreed:

The “transformative use” concept is pertinent to a court’s investigation under the first factor because it assesses the value generated by the secondary use and the means by which such value is generated. To the extent that the secondary use involves merely an untransformed duplication, the value generated by the secondary use is little or nothing more than the value that inheres in the original. Rather than making some contribution of new intellectual value and thereby fostering the advancement of the arts and sciences, an untransformed copy is likely to be used simply for the same intrinsic purpose as the original, thereby providing limited justification for a finding of fair use… (emphasis added).

As in the case at hand, the 2nd Circuit observed that making full copies of the scientific articles was solely for the consumption of the material itself. A rejoinder, of course, is that training these AI systems surely advances scientific research and, thus, does foster the “advancement of the arts and sciences.” But in American Geophysical Union, where the secondary use was explicitly for the creation of new and different scientific outputs, the court still held that making copies of one scientific article in order to learn and produce new scientific innovations did not count as “transformative.”

What this case shows is that one cannot merely state that some social goal will be advanced in the future by permitting an exception to copyright protection today. As the 2nd Circuit put it:

…the dominant purpose of the use is a systematic institutional policy of multiplying the available number of copies of pertinent copyrighted articles by circulating the journals among employed scientists for them to make copies, thereby serving the same purpose for which additional subscriptions are normally sold, or… for which photocopying licenses may be obtained.

The secondary use itself must be transformative and different. Where an AI system ingests copyrighted works, that use is simply not transformative; it is using the works in their original sense in order to train a system to be able to make other original works. As in American Geophysical Union, the AI creators are completely free to seek licenses from rightsholders in order to train their systems.

Finally, there is a sense in which this machine learning might not infringe on copyrights at all. To my knowledge, such technology does not exist, but if a machine could somehow “see” in the way that humans do, without using stored copies of copyrighted works, then merely “learning” from those works (insofar as we can call it learning) probably would not violate copyright law.

Do the outputs of these systems violate intellectual property laws?

The outputs of GANs and diffusion models may or may not violate IP laws, but there is nothing inherent in the processes described above to dictate that they must. As noted, the most common AI systems do not save copies of existing works, but merely “instructions” (more or less) on how to create new works that conform to patterns they found by examining existing work. If we assume that a system isn’t violating copyright at the input stage, it’s entirely possible that it can produce completely new pieces of art that have never before existed and do not violate copyright.

They can, however, be made to violate IP rights. For example, trademark violations appear to be one of the most popular uses of these AI systems by end users. To take but one example, a quick search of Google Images for “midjourney iron man” returns a slew of images that almost certainly violate trademarks for the character Iron Man. Similarly, these systems can be instructed to generate art that is not just “in the style” of a particular artist, but that very closely resembles existing pieces. In this sense, the system would be making a copy that theoretically infringes.

There is a common flaw in such systems that makes outputs more likely to violate copyright in this way. Known as “overfitting,” it can arise when the training phase of these AI systems is presented with samples that contain too many instances of a particular image. The resulting model then holds too much information about that specific image, such that when the AI generates a new image, it is constrained to producing something very close to the original.
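A crude analogy shows why duplicated training data pulls outputs toward one particular work. The sketch below uses a toy model that stores only a per-pixel mean and standard deviation; that is an assumption made for illustration, since real image generators are neural networks, and this toy does not overfit in the technical sense. The function names are hypothetical. As the number of copies of a single image in the corpus grows, the fitted distribution concentrates around that image, and generated outputs land closer and closer to it.

```python
import math
import random


def fit_pixel_gaussians(images):
    """Toy 'training': keep only a per-pixel mean and standard deviation."""
    n = len(images)
    columns = list(zip(*images))
    means = [sum(col) / n for col in columns]
    stds = [math.sqrt(sum((v - m) ** 2 for v in col) / n) for col, m in zip(columns, means)]
    return means, stds


def generate(model, rng):
    means, stds = model
    return [rng.gauss(m, s) for m, s in zip(means, stds)]


def distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def average_distance_to(work, copies, varied, rng, samples=50):
    """Train on a varied corpus plus `copies` duplicates of one work, then
    measure how close generated images land to that work on average."""
    model = fit_pixel_gaussians(varied + [work] * copies)
    return sum(distance(generate(model, rng), work) for _ in range(samples)) / samples


rng = random.Random(42)
famous = [rng.uniform(-1, 1) for _ in range(16)]  # one heavily duplicated work
varied = [[rng.uniform(-1, 1) for _ in range(16)] for _ in range(100)]
for copies in (1, 10, 1000):
    print(copies, round(average_distance_to(famous, copies, varied, rng), 2))
```

The average distance shrinks markedly as the number of copies rises from 1 to 1,000, which is the toy version of a model being “constrained to producing something very close to the original.”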

An argument can also be made that generating art “in the style of” a famous artist violates moral rights (in jurisdictions where such rights exist).

At least in the copyright space, cases like Sony Corp. of America v. Universal City Studios, which held that selling a technology capable of substantial noninfringing uses does not by itself make the seller liable for its users’ infringement, are going to become crucial. Does the user side of these AI systems have substantial noninfringing uses? If so, the firms that host software for end users could avoid secondary-infringement liability, and the onus would fall on users to avoid violating copyright laws. At the same time, it seems plausible that legislatures could place some obligation on these providers to implement filters to mitigate infringement by end users.
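One form such a filter might take is a near-duplicate check run on each output before it is returned to the user. The sketch below uses average hashing, a simple perceptual-hashing technique, and assumes images have already been reduced to 8x8 grayscale grids; the registry, the function names, and the 5-bit threshold are hypothetical. A production system would resize real images first and would likely use a more robust fingerprint, and a check like this catches near-copies of specific works rather than imitation of an artist’s style.

```python
def average_hash(image):
    """Average hash of a small grayscale image (a list of rows of 0-255 values).

    Each bit records whether a pixel is brighter than the image's mean, so a
    uniform brightness change leaves the hash unchanged (barring clipping)
    and small edits flip only a few bits.
    """
    flat = [p for row in image for p in row]
    mean = sum(flat) / len(flat)
    return [1 if p > mean else 0 for p in flat]


def hamming(a, b):
    """Number of bit positions at which two hashes differ."""
    return sum(x != y for x, y in zip(a, b))


def flag_output(candidate, registered_hashes, max_bits_different=5):
    """Return True if a generated image is a near-duplicate of a registered work."""
    h = average_hash(candidate)
    return any(hamming(h, known) <= max_bits_different for known in registered_hashes)


# Hypothetical registry holding the hash of one protected work (an 8x8 gradient).
work = [[(r * 8 + c) * 3 for c in range(8)] for r in range(8)]
registry = [average_hash(work)]

brightened_copy = [[v + 20 for v in row] for row in work]
checkerboard = [[255 if (r + c) % 2 else 0 for c in range(8)] for r in range(8)]
print(flag_output(brightened_copy, registry))  # True: same picture, brighter
print(flag_output(checkerboard, registry))     # False: 32 of 64 bits differ
```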

Opportunities for New IP Commercialization with AI

There are a number of ways that AI systems may inexcusably infringe on intellectual-property rights. As a best practice, I would encourage the firms that operate these services to seek licenses from rightsholders. While this would surely be an expense, it also opens new opportunities for both sides to generate revenue.

For example, an AI firm could develop its own version of YouTube’s ContentID that allows creators to opt their work into training. For some well-known artists this could be negotiated with an upfront licensing fee. On the user side, any artist who has opted in could then be selected as a “style” for the AI to emulate. When users generate an image, a royalty payment to the artist would be created. Creators would also have the option to remove their influence from the system if they so desired.
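The bookkeeping side of such a scheme is straightforward to sketch. In the example below, the class name, the flat per-image royalty, and the method names are all hypothetical; it models only opting in (optionally with an upfront fee), offering opted-in artists as selectable styles, accruing a royalty per generated image, and opting out. Actually removing an artist’s influence from an already-trained model is a much harder technical problem (it may require retraining), and this sketch does not attempt it.

```python
from dataclasses import dataclass, field


@dataclass
class StyleRegistry:
    """Hypothetical opt-in registry for artist styles, with per-image royalties.

    Amounts are kept in integer cents to avoid floating-point rounding.
    """
    royalty_cents_per_image: int = 5  # hypothetical flat rate
    opted_in: set = field(default_factory=set)
    balances: dict = field(default_factory=dict)  # artist -> cents owed

    def opt_in(self, artist, upfront_fee_cents=0):
        """Add an artist; a well-known artist might negotiate an upfront fee."""
        self.opted_in.add(artist)
        self.balances[artist] = self.balances.get(artist, 0) + upfront_fee_cents

    def opt_out(self, artist):
        """Stop offering the style and stop accruing royalties for it."""
        self.opted_in.discard(artist)

    def available_styles(self):
        return sorted(self.opted_in)

    def record_generation(self, artist):
        """Called once per image a user generates in the style of an artist."""
        if artist not in self.opted_in:
            raise ValueError(f"{artist!r} has not opted in")
        self.balances[artist] += self.royalty_cents_per_image


registry = StyleRegistry()
registry.opt_in("Artist A", upfront_fee_cents=100_000)
registry.opt_in("Artist B")
for _ in range(3):
    registry.record_generation("Artist B")
registry.opt_out("Artist A")
print(registry.available_styles())  # ['Artist B']
print(registry.balances)            # {'Artist A': 100000, 'Artist B': 15}
```

Keeping opt-out separate from the balance ledger means royalties already earned are preserved when an artist withdraws.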

Undoubtedly, there are other ways to monetize the relationship between creators and the use of their work in AI systems. Ultimately, the firms that run these systems will not be able to simply wish away IP laws. There are going to be opportunities for creators and AI firms to both succeed, and the law should help to generate that result.