Here in New Jersey, where I live, the day before Halloween is commonly celebrated as “Mischief Night,” an evening of adolescent revelry and light vandalism that typically includes hurling copious quantities of eggs and toilet paper.

It is perhaps fitting, therefore, that President Joe Biden chose Oct. 30 to sign a sweeping executive order (EO) that could itself do quite a bit of mischief. And befitting the Halloween season, in proposing this broad oversight regime, the administration appears to be positively spooked by the development of artificial intelligence (AI).

The order, of course, embodies the emerging and now pervasive sense among policymakers that they should “do something” about AI; the EO goes so far as to declare that the administration feels “compelled” to act on AI. It largely directs various agencies to each determine how they should be involved in regulating AI, but some provisions go further than that. In particular, directives that set new reporting requirements—while ostensibly intended to forward the reasonable goal of transparency—could end up doing more harm than good.

Capacious Definitions that Could Swallow the Whole World of Software

One of the EO’s key directives would require companies that develop so-called “dual-use” AI models to report to the government about efforts to secure the weights—key parameters that shape outputs—that the models use. The directive would apply to “foundation” models—that is, large deep-learning neural networks trained on massive datasets to form the “base” model for more specific applications—that could be manipulated in ways that pose significant security threats (the “dual” use in question). The order would tag such models as “dual use” even if developers or providers attempt to set technical limits to mitigate potentially unsafe uses.

Among the obvious risks the order contemplates as potential security threats are models that could assist in developing chemical, biological, or nuclear weapons. But it also includes broader language regarding what amounts to a catch-all threat, namely that models could be deemed dual use to the extent that they “[permit] the evasion of human control or oversight through means of deception or obfuscation.”

By focusing on mutable systems that could potentially facilitate harm, the order’s definition casts a wide net that could sweep up many general-purpose AI models. Moreover, by categorizing systems that might circumvent human judgment as dual-use, the order effectively casts all AI as potentially hazardous.

For that matter, given the order’s capacious definition of “artificial intelligence” (“a machine-based system that can, for a given set of human-defined objectives, make predictions, recommendations, or decisions influencing real or virtual environments”), it’s possible that many software systems we wouldn’t think of as “AI” at all will get classified as “dual-use” models.

Restricting Open Research Has Unintended Consequences

One major downstream consequence of the order’s reporting requirements for dual-use systems is that they could inadvertently restrict open-source development. Companies experimenting with AI technologies, including many that may not think their work qualifies as dual use, would likely refrain from discussing their work publicly until it’s well into the development phase. Given the expense of complying with the reporting requirements, firms without a sufficiently viable revenue stream to support both development and legal compliance will have an incentive to avoid regulatory oversight in the early stages, thereby chilling potential openness.

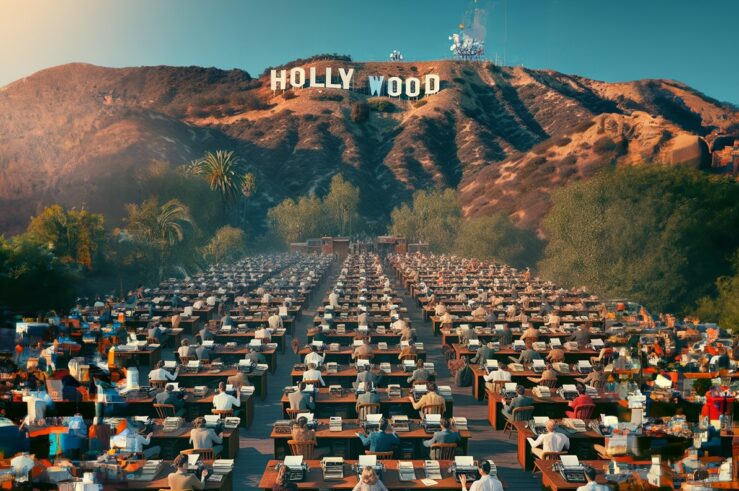

It’s hard to envision how fully open-source projects would be able to survive in this kind of environment. Open-source AI code libraries like TensorFlow and PyTorch drive incredible innovation by enabling any developer to build new applications atop state-of-the-art models. Why would the paradigmatic fledgling software developer operating out of someone’s garage seriously pursue open-source development if components like these are swept into the scope of the EO?

Unnecessarily guarding access to weights used by the models, not to mention refraining from open-source development altogether, could prevent independent researchers from advancing the state of the art in AI development.

For example, AI techniques hold incredible potential to advance various scientific fields (e.g., applying deep learning to MRI images to diagnose brain tumors, or using machine learning to advance materials science). What’s needed for this research is not restrictive secrecy but openness that empowers more minds to build on AI advances.

The Risks of Treating AI as a National Security Matter

While the executive order does not specify how violations of the reporting requirements would be policed, given that it claims authority under powers granted by the Defense Production Act (DPA) and the International Emergency Economic Powers Act (IEEPA), any penalties for noncompliance would likewise have to stem from those underlying laws.

The DPA—which authorizes the president to require industry to prioritize and fulfill certain production orders during national emergencies—prescribes fines of up to $10,000 and imprisonment for up to a year for refusing such orders. The IEEPA—which allows the president to impose export controls, sanctions, and transaction screening in order to regulate international commerce during national emergencies—authorizes civil fines of up to $365,579 or criminal fines up to $1 million (along with up to 20 years imprisonment) for violators.

But actually enforcing these policies, which cast the United States as overly defensive about AI leadership, could easily backfire. Breakthroughs tend to be accelerated through global teamwork. By its very nature, scientific work often involves collaboration among researchers from around the world. By placing AI development under the umbrella of the U.S. national-security apparatus, the EO risks limiting international collaboration and isolating American scientists from valuable perspectives and partnerships.

Just as seat belts reduced accident costs but inadvertently increased reckless driving, mandated scrutiny of model weights, while intended to increase accountability, may inadvertently restrict the flow of innovative ideas that are needed to ensure the United States remains a world leader in AI development.

Striking the Right Balance

AI holds tremendous potential to improve our lives, as well as risks that require thoughtful oversight. Regulations born of fear, however, threaten to derail beneficial innovation. Policymakers must strike the right balance between safeguards and progress.

The EO, unfortunately, would essentially replay a critical episode in the history of encryption, but this time taking the erroneous path that we only narrowly avoided the first time around.

With encryption, despite recognizing potential downsides, policymakers embraced an optimism that allowed breakthroughs like personal computers and the internet to thrive. The Biden administration, by contrast, focuses predominantly on hypothetical risks rather than on AI’s immense potential benefits. And it does so via a preemptive approach poorly suited to a technology still in its infancy.

Mandating scrutiny of research can ensure discoveries aren’t misused. But onerous restrictions that discourage openness may do more harm than good. Measured transparency, balanced by liberal information sharing, will speed discoveries for social benefit.

Advocates for AI development and responsible governance want the same thing: the improvement of the human condition. But we differ on how to get there. The way forward requires evidence, not alarmism, lest regulations designed to protect have the unintended consequence of precluding the very progress we seek to responsibly foster.